Computer vira in general, and the technically impressive Stuxnet virus* in particular, provide important lessons interface designers should take to heart - instead of following the design guidelines of spammers.

The interface lesson of spam is as follows

Throw a lot of shit out there, and the users will self-selectSpam is sent to a generous multiple of the users it actually works for, in the sense that they react to it. The reason this works is that the spammer does not pay for our attention.

The interface lesson of computer vira, on the other hand, is the converse

Stay low, stay out of sight, until you have a high chance of successIf a virus is very obnoxious, disinterested users are quickly annoyed by it, and inoculation - in the form of signature files for antivirus software - quickly develops. Stuxnet is an extreme example of this, going to great lengths to stay out of the way except where the intended target - Iranian nuclear facilities controlled by specific Siemens industrial control software - was found.

Your software is better if it operates on a virus theory of interface design, than a spam theory of interface design. Err on the side of no interaction, if you're not sure the interaction will be useful.

* it's a worm. I know. To the normals, everything is a "virus".

When I've been doing stuff with Morten, we've always had a basic dividing line in how we are interested in things, which has been very useful in dividing the work and making sure we covered the idea properly. Morten is intensely interested in use, and I'm intensely interested in capabilities and potentials.

Any good idea needs both kinds of attention, so it's good to work with someone else who can really challenge your own perspective. If only we had a third friend who was intensely interested in making money we could have really made something of it. It's never too late, I suppose.

Anyway, my part of the bargain is capabilities. Yesterday evening, and this morning, I added another year worth of lifetime to my aging* Android phone, one of the original Google G1 development phones.

It's a slow, old device compared to the current android phones. Yesterday, however, by installing Cyanogenmod on the phone, I upgraded to Android 2.2 - Froyo - and boy, that's a lot of capability I just added to the phone**.

First, about the lifetime: Froyo has a JIT, an accelerator if you're not a technical person, which makes it possible for my aging phone to keep up with more demanding applications, expecting better hardware.

Secondly, Froyo is supported by the Processing environment, for experimental programming, so now I can build experimental touch interfaces in minutes, using the convenience of Processing. This makes both Processing and the phone infinitely more useful.

Thirdly, Froyo has a really nice "use your phone as an Access Point"-app for sharing your 3G connection over WiFi***. I had a hacked one for Android 1.6 as well and occasionally this is just a really nice appliance to have in a roomfull of people bereft of internet.

Fourth, considering that Chrome To Phone is just an easy demo of the cloud services behind Froyo it sure feels revolutionary. Can't wait to see people maxing out this particular capability.

Fifth, and it feels silly to have to mention this, but Froyo is the first smartphone HW/SW combo you can add storage in a reasonable way, i.e. moving apps + everything to basically unlimited replaceable storage.

On top of all the conveniences of not being locked down, easy access to the file system, easy backup of text messages and call logs; this feels like a nice edition of Android to plateau on for a while. If the next year or so is more about hardware, new hardware formats, like tablets, and just polishing the experience using all of these new capabilities, I think that'll work out great.

* (1.5 yrs old; yes it sucks that this is 'old'. We need to do better with equipment lifetimes)

** I'm going to but a couple of detailed howto posts on the hacks blog over the next couple of days, so you can do the same thing.

*** For Cyanogenmod, you need this.

I got into the Wave preview, and first things first: This really is a beta. The bugs. It is full of them. It is a dangerous move, lots of people aren't very good at looking at unfinished things, but I personally appreciate the opportunity.

Second thing: You can't really evaluate something that involves communication before you're actually trying it out for communication that you really want to be doing. Trying out Wave without a real need or purpose - because I can't invite the people I usually need to talk to - makes me question any and all user testing of software that isn't done with real paying customers.

That being said, even on the features, it seems the experience is a rewrite or two from being generally useful. Which is not a problem. GMail, famously, was redone 6 times for experience. Wave still feels like a lot of the interface has not been sandpapered down to proper size. I'm even unsure about the basic interface metaphor.

After seeing the original Wave demo, and reading the whitepapers, I wrote about how the project hit all the right marks in terms of openness and technology, and I still think the underlying tech - choice of Problem, Platform and Policy - is incredibly right. It's just that its right for a lot of different experiences and the one we're looking at now isn't polished yet, and doesn't really show off all the 3Ps can handle.

So what could it do and what does it do and what does and does not work?

What the Wave technology does underneath the experience is real time, collaborative, federated, versioned, editing of XML

- Real time: Changes are transmitted live as they happen

- Collaborative: Many users can edit the same resources

- Federated: You can set up your own server and collaborate with people on Google's servers - no silos

- Versioned: There's full edit history

- XML: It's structured, so you can do database-y object-y stuff

That is an almost complete list of things you can ask for in shared action on the internet.

But of course it is not an experience.

The Wave UI seems to have stayed pretty close to the tech-description above. The experience is "structured, live, collaborative writing". Examples of what it isn't:

- "Lifted" email (like GMail was).

- "Lifted" chat (not sure anybody does that).

- A way for me to communicate with non-Wave users

What I was hoping was maybe that it felt like the ideal final resting place for my GMail and my GTalk archives, as well as a new way to collaborate, but I'm not sure these experiences can all fit into the same UI. For starters, the ability to edit is irrelevant, if not downright odd, for chat - our brains can't do it, so it seems odd that the interface can.

However, we could just have a couple of different experiences - much like I chat in Adium, but love the archive in GMail. That integration is incomplete - in that I can't restart the chart later. Once I'm in GMail I have moved on. There's no Adium friendly API into the chat archives. With Wave I could start a conversation with reference in a previous conversation - which could be a great interface. Also, the ability to fork conversations, could be made very nice as an experience.

When I started writing, I wanted to include my list of observations of odd things in Wave (desktop metaphor seems wrong for this, wave/contact/tool organization takes up too much screen compared to content, muting vs archiving hard to understand, 99 changes in a wave impossible to understand, bots vs gadgets what does what? why is there a 'display' and an 'edit' mode?) as well as what seems like bugs - but I think I'll do that separately later in a better format.

So, yesterday afternoon, Morten suggested it would be cool if there was a site that could score the days at Roskilde against personal preferences as expressed through Last.fm.

Indeed it would be, and since Morten does nice minimal interfaces and I do data gathering and mixing, we agreed to split the work, and build the Best day at Roskilde-finder.

It's worthwhile to have a look at what infrastructure we have used for this and which situational hacks are involved. I didn't have to scrape the concert program myself, as Steffen had already done that, through Yahoo's YQL.

What I needed to do is mine Last.fm's API for relevancy for those bands to merge with the user's favorite bands.

Present that to Morten's website as simply as possible and let Morten make a useful interface for the data.

It doesn't quite end there, though. Morten had previously exploited live play information from Danish National Radio to create a radio station persona on Last.fm.

Through Spotify, using Spotify's Last.fm integration he is also building a Roskilde Festival persona.

- these will give more general than personal answers: "If you're the kind of person listening to this radio station you will like".

It's interesting how much infrastructure is available - and useful - for a mashup like this.

We're using Yahoo, Last.fm, Danish Radio's website, Roskilde's website and Spotify as data sources/web services - and combining preexisting situational hacks from 3 people, on top of the obvious webservers and direct hacking.

These resources can be combined, and hidden away, in less than 10 hours to produce a coherent, simple and fun website.

Add instant distribution through Facebook and Twitter (Facebook wins) and there's a nice useful bit of mashup for an intended audience of 200-10000 people.

Netbooks, so far, haven't really been interesting. They are cheap - and of course that's interesting in and of itself - but they don't really change what you can do in the world. Their battery life, shape, weight and notably software have been much the same as expensive laptops, just with a little less in the value bundle. Which is perfectly fine for 90% of laptop uses.

That's set to change, though. New software, assuming the network, and consumer packaged for simplicity, sociality and "cultural computing" more than "admin and creation" style computing is just about to surface. Fitted with an app-store and simplified, the netbook assumes more an appliance role than a general purpose computing role.

The hardware vendors are adapting to that idea also; moving towards ultra low power consumption and enough battery life that you simply stop thinking about the battery.

Meanwhile, Microsoft is busy squandering this opportunity. They simply don't get this type of environment, apparently - and are intent on office-ifying and desktop-ifying the metaphor. Where Bill Gates "a computer on every desk" was once a vision of not having computing only in corporations and server parks it is now severely limiting. Why do I need a desk to have a computer?

I thought Bing vs Wave makes an interesting comparison. Bing is a rebranding of completely generic search; absolutely nothing new. Not a single feature in the presentation video does anything I don't already have. And yet it's presented in classic Microsoft form as if it was something new and as if these unoriginal product ideas sprang from Microsoft by immaculate conception.

Contrast that to Google Wave, which - if it does something wrong - is overreaching more than underwhelming. And contrast also Wave's internet-born and internet-ready presentation and launch conditions. It's built on an open platform (XMPP aka Jabber). The Wave whitepapers gladly acknowledge the inspiration from research on collaborative creation elsewhere. The protocol is published. A reference implementation will be open sourced. The hosted Wave service will federate. It is a concern for Google (mentioned in presentations) to give third parties equal access to the plugin system - the company acknowledges that internally grown stuff has an initial advantage and is concerned with leveling the playing field.

Does Microsoft have the culture and the skills to make the same kind of move? I'm not suggesting that there's an evil vs nonevil thing here - obviously Google wins by owning important infrastructure - but just that the style of invention in Wave, based on other people's standards and given away so others can again innovate on top of it, seems completely at odds with Microsoft's notion of how you own the stuff you own.

So Wolfram Alpha - much talked about Google killer - is out. It's not really a Google killer - it's more like an oversexed version of the Google Calculator - good to deal with a curated set of questions.

The cooked examples on the site often look great of course, there's stuff you would expect from Mathematica - maths and some physics, but my first hour or two with the service yielded very few answers corresponding to the tasks I set my self.

I figured that one of the strengths in the system was that it has data not pages, so I started asking for population growth by country - did not work. Looking up GDP Denmark historical works but presents meaningless statistics - like a bad college student with a calculator, averaging stuff that should not be averaged. A GDP time series is a growth curve. Mean is meaningless.

Google needs an extra click to get there - but the end result is better.

I tried life expectancy, again I could only compare a few countries - and again, statistics I didn't ask for dominate.

Let's do a head to head, by doing some stuff Google Calculator was built for - unit conversion. 4 feet in meters helpfully over shares and gives me the answer in "rack units" as well. Change the scale to 400 feet and you get the answer in multiples of Noah's Ark (!) + a small compendium of facts from your physics compendium...

OK - enough with the time series and calculator stuff, let's try for just one number lookup: Rain in Sahara. Sadly Wolfram has made a decision: Rain and Sahara are both movie titles, so this must be about movies. Let's compare with Google. This is one of those cases where people would look at the Google answer and conclude we need a real database. The Google page gives a relief organisation that uses "rain in sahara" poetically, to mean relief - and a Swiss rockband - but as we saw Wolfram sadly concluded that Rain + Sahara are movies, so no database help there.

I try to correct my search strategy to how much rain in sahara which fails hilariously by informing me that no, the movie "Rain" is not part of the movie Sahara. Same approach on Google works well.

I begin to see the problem. Wolfram Alpha seems locked in a genius trap, supposing that we are looking for The Answer and that there is one, and that the problem at hand is to deliver The Answer and nothing else. That model of knowledge is just wrong, as the Sahara case demonstrates.

The over sharing (length in Noah's Ark units) when The Answer is at hand doesn't help either, even if it is good nerdy entertainment.

Final task: major cities in Denmark. The answer: We don't know The Answer for that - we have "some answers" but not The Answer, so we're not going to tell you anything at all.

Very few questions are really formulas to compute an answer. And that's what Wolfram Alpha is: A calculator of Answers.

This project is so right. Replace Processing's own language with as intuitive, but very powerful, Scala and you have the immediacy of processing with some really serious legs for later abstraction.

I even think Scala has standard Processing beat as far as intuition goes. No void. No brackets when there are no parameters and so on. Better expression/ascii ration, quite simply.

Ubicomp er den gamle drøm om beregning i alting - og her er et virkelig godt slideshow, der diskuterer om vi ikke har fået det allerede uden at lægge mærke til det - i iPods og snedige telefoner og uventede remixes af virkelighedsdata med webdata. Man får virkelig aktiveret tankerne her.

When we built Imity - bluetooth autodetecting social network for your cell phone - we did - of course - get the occasional "big brother"-y comment about how we were building the surveillance society. We were always very careful to not frame the application as being about that, careful with the language, hoping to foster a culture that didn't approach the service on those terms. We never got the traction to see whether our cultural setup was sufficient to keep the use on the terms we wanted, but it was still important to have the right cultural idea about what the technology was for, to curb the most paranoid thinking about potentials.

It's simply not a reasonable thing to ask of new technology, that it should be harm-proof. Nothing worthwhile is. Cars aren't. Knives aren't. Why would high-tech ever be. And just where in the narrative of some future disaster does the backtracking to find the harm end? Computers and the internet are routinely blamed for all kinds of wrongdoing, whereas the clothing, roads, vehicles and other pre-digital artifacts surrounding something bad routinely are not.

What matters is the culture of use around the technology, whether there is a culture of reasonable use or just a culture of unreasonable use. And you simply cannot infer the culture from the technology. Culture does not grow from the technology. It just does not work that way.

I think a lot of the internet disbelief wrt. to The Pirate Bay verdicts comes from basically missing this point. "But then Google is infringing as well" floats around. But the important thing here is that Pirate Bay is largely a culture of sharing illegally copied content whereas Google is largely a culture of finding information.

I think it's important to keep culture in mind - because that in turn sets technology free to grow. We can't blame technology for any potential future harm; we'll just have to not do harm with it in the future - but the flip side of course is that responsibility remains with us.

I haven't read the verdict, but the post verdict press conference focused squarely on organization, behaviour and economics of what actual crossed the Pirate Bay search engine, which seems sound.

- that being said, copyright owners are still squandering the digital opportunity by not coming up with new ways of distribution better suited for the digital world, but the internet response wrt. The Pirate Bay that they just couldn't be quilty, for technological reasons, does not really seem solid to me, if we are to reason in a healthy manner about technology and society at all.

The What You Want-Web got a number of power boosts this week.

- iTunes, the online music hegemony, is now fully DRM-free. Differentiated pricing so far means mostly a price bump to $1.29 (says reports), but have you seen App Store prices lately? iTunes is now thoroughly lock-in free.

- Spotify, the most disruptive challenge to the above hegemony, launched an API, the only plausible response to the open source despotify library that surfaced a little while ago. It is Linux only so far, but that has to be a temporary condition. Spotify still has DRM and probably needs a different level of bargaining power to make the argument that it does not matter anymore.

- Google App Engine announced Java support, and not just Java, but full JVM support. This essentially announces Javascript, Groovy, Scala support as well (one would expect Lift soon), and - as important as more languages - better DB interop. Specific tools for importing and exporting data from the App Engine.

The What-You-Want Web is my just-coined phrase for the lock-in free, non-value-bundled, disintermediated, higly competitive computation, api, and experience fabric one could hope the web is evolving towards. Twitter already lives there, nice to see some more people join.

The important thing about all of these announcements is that they forgo a number of options for making money off free/cheap: Lowering the friction towards zero means the services have to succeed on their own merits. If they fail to offer what I need or want, I can just leave. I don't have to buy into the platform promise of any of these tools, I can just get the stuff that has value to me.

I think in 5 years we will remember Twitter largely as the first radically open company on the web. Considering the high availability search and good APIs, there literally is no aspect of your life on Twitter that you can't take with you.

P.S. (Also, three cheers for Polarrose, launching flickr/facebook-face recognition today. A company adding decisive value with unique technology, born to take advantage of the WYW-Web.)

Pretty good overview of what's wrong with URL shorteners. They destroy the link space, adds brittle infrastructure run by who knows who. We already know that the real value proposition is traffic measurement - i.e. selling your privacy short.

The problem of course is the obvious utility of shorteners.

This is all new stuff, the current state of the art is not how it is going to end.

Kunstig Intelligens er som regel noget med elegante søgealgoritmer. Man har en masse data, og vil gerne vide noget om dem, og så bruger man en af en række af elegante søgealgoritmer; der er forskellige snit - brute force, optimale gæt, tilfældige gæt. Inde i kernen af sådan en algoritme ligger der en test, der viser om man har fundet det man ledte efter.

Det er jo enkelt nok at forstå, når man ikke bare kigger på det magiske resultat. Mere punket bliver det, når testen der er kernen i algoritmen, udføres af en laboratorierobot. Altså af en rigtig fysiske maskine, der arbejder med rigtig fysiske biologiske systemer i laboratoriet.

Sådanne maskiner findes faktisk, ihvertfald en af dem. Og den har lige haft et gennembrud og isoleret et sæt gener, der kodede for et enzym, man ikke kendte den genetiske kilde til.

Wiredartiklen har mange flere detaljer, hvad der gør det ekstra trist at vide at Wired Online lige er blevet skåret drastisk ned af en sparekniv.

Listen to this, as the frequency goes up, splits into multiple tones, and then turns into chaos, briefly reintegrates, and then turns back into chaos. You might also like this version, where I've simplified to pure semi tones (i.e. the keys on a piano).

[UPDATE: New personal favourite - in C major - much more dramatic.]

The logistic map is probably the simplest and most celebrated math lab example of chaos.

It's a pretty simple function f. There's a control parameter r. When you take a number, say 0.5 and compute f(0.5) and then f(f(0.5)) and so on, interesting things happen. When the parameter r is low, you quickly end up at a fixed value, some point p where f(p) = p, so the iteration just stays there. When you increase r however, a lot of stuff happens - first a split, so the iteration flip flops between two values, and then that happens again into four values and so on. Above a certain value of r you reach chaos. This famous image shows the fixed points and chaos of the iteration for values of r.

The image however is static - you don't get a feel for how the dynamics of the iteration hops around on the image.

I was curious how that sounds, so I made this Pure Data patch and took a slow slide up the chaos scale. The result is above.

Her er en nydelig, teknologifri, produktintroduktion. Det er appetitligt og imødekommende - problemet er bare at det løfte produktet giver er et jeg har hørt, og set svigtet, dusinvis af gange. Alting fra Microsofts "Information at your fingertips" til tagclouds deler løftet. Og det holder aldrig helt. De bedste skud nogensinde på at holde det løfte er Google og Wikipedia.

Den abstrakte venskabelighed kommer simpelthen i vejen; en enkelt konkret succes i videoen havde nok solgt det bedre.

Sensing in the iPhone, Radiohead 3D data and a little hacking, and you have Thom Yorke doing his best Leia-Hologram impersonation in the air above an iPhone.

Polarrose har fået nyt look og ansigtssøgemaskine. Her er f.eks. Abraham Lincoln i mange udgaver. Tallet over hvert ansigt dækker over mange steder hvor det samme billede er fundet, så en nejs feature er altså at man så enkelt som muligt ser forskellige billeder af en person.

My old amazon hack to collaboratively filter on amazon.com, but purchase within the EU from amazon.co.uk had gone staie but is now fixed: Find it on amazon.co.uk (usage: Drag to toolbar, click when on a single book page on amazon).

Amazon has gone to "meaningful URLs" - except not for machines so much, without changing. Screenscraping and its bookmarklet cousin has always been brittle.

On the Second Life blog Jim Purbrick riffs on a session we had at EuroFOO about mixing the real world and Second Life. What Jim has done - and what was the onset of the discussion - and what we've done a little bit of at Imity - is prototyping data-enriched physical worlds - augmented reality - in Second Life where everything works and you don't have to mess about with the physical shortcomings of Bluetooth or RFID scans. We talked a little bit about this in the context of CO2 accounting - modeling high fidelity CO2 accounting inside Second Life, giving you a perfect CO2 history of every simulated object in SL. The logical conclusion - all the more relevant since the recent interest in Second Life ecology, was to do actual CO2 accounting for Second Life inside the simulated world. But this probably is some ways off.

By no means have we given up on the idea of doing nice Imity/Second Life crossovers by the way. There's just the problem of time.

Also, note how this very nice idea is completely immune to the SL hype discussion.

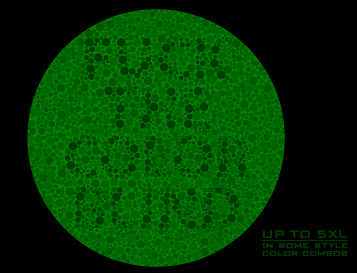

Through a thread here (in danish) I found this T-shirt. I'm actually slightly red/green challenged so while I could tell that there was a text there I couldn't tell what it said. (I can tell the colors apart easily but some of the tests designed to trip people like me up do trip me up). Asking a colleague produced no result (more danish) - well actually it did, he's just telling the better story where it doesn't - so I did the reasonable thing and wrote a perl script to color separate the image so I could tell what it said.

The color separation also shows how the trip up actually works. Above is the red, green and blue components of the image respectively. Notice how the blue component is just noise as far as the test goes. The red has more signal in the letters and the green has less signal inside the letters. One can see how the obfuscation works when you have trouble distinguishing red from green: The reduced green signal is matched by an increased red signal, but if you can't tell them apart this just cancels to noise.

Presumably, if the lowered green matched the heightened red exactly I wouldn't even know I was missing out on something if it weren't for the social clue in "this looks like one of those colortests".

The blue component has absolutely no effect on the readability of the text (for me, that is). This image, which is the red and green without the blue, is more or less as unreadable as the original (i.e. I can tell there's something to miss, but not what I'm missing).

The code I used for the separation can be found here.

Here's a totally obvious fact not much talked about: Of course virus writers test against popular anti-virus packages before 'releasing'. They want the virus to work after all.

Not exactly for the same reasons, but I assume the story is equally obvious if you replace 'virus writers' with 'bacteria' and 'popular anti-virus packages' with 'antibiotics commonly used in hospitals'.

Fun, but fundamentally broken business idea: "Why don't we make an 'ideas for software' exchange where you can sell your idea without having to build the software!". You publish the idea and then let other people run with it and then they pay you back for that pivotal seed insight later on. Fun idea. But there's a reason Ben Hammersley isn't rolling in cash generated by Lazyweb which is simply that ideas are dirt cheap and people have them all the time. Their value is really, really close to zero. Unless you have real pyaing customers with money in hand waiting for your idea then you're just not adding any significant value by just having the idea. The problem in making money with ideas is in the words making and money not the word ideas.

The idea that you can test the idea before actually building is novel - but unless there's a creative process there - a brebuild phase that adds value and diminishes development cost later, itøs just a waste of valuable lead time.

As a more personal aside - I just don't believe markets build good software, not yet anyway - good software (if it is new in any interesting way) happens best with 3-4 guys in a room who know each other well and have the necessary skills. It's a refreshing idea to create an idea market for this kind of thing but the transaction costs in building software are just too high. They actually dwarf the price of the software itself. That's why you try to build teams small enough that you don't have transaction costs.

Ning is attacking the same kind of problem, which is of equally doubtful value, but at least they're changing the rules where it matters: The price/time to execute the idea.

Who knew? There's a cybernetic scientific principle to back up the "simple software" movement as promoted in words and actions by 37 Signals. The law says that if your system (here, software) is more flexible it will be able to handle more usage scenarios. As discussed all over the place on Signal vs. Noise, simple software is flexible because as you try to apply it to new situations it doesn't have a lot of parts that get in the way.

I like the connection. It also rhymes with basic intuitions from physics and mathematics: The more constraints (i.e. features) you add to a system (of equations), the fewer solutions (i.e. uses) it has.

The Unix Way was bred from this understanding more than 30 years ago. It's saddening that the economics of selling software (mainly Windows + MS Office, but there are other culprits) has led us on such a detour.

So previously I was talking about some of the commonolaties between good innovation practice in general and some of the well tested methods to do agile software design. For the agile software side of that equation I forgot this summary by Alistair Cockburn of what you're supposed to do. I particularly like that Cockburn also has a version of the "leave it alone" suggestion I made with his personal safety point.

The good thing about the practices Cockburn highlight is that none of them cost serious money or time. All it is is healthy direct communication that builds good knowledge - and good products.

I feel blessed to have experienced this kind of work environment over the last couple of years...

[UPDATE: Also Joi Ito chimes in from a completely different angle:

Some of the elements of a cool place is that there isn’t so much of an "authority" but there is a sense of safety.]

Sharing a quick note doesn't get much simpler than this.

Also - congratulations to shortText on just delivering service before asking me for anything.

(link: Morten)

It's obvious isn't it? GMail's very nice compression of threads makes email as efficient and compact as IM.

It's secure. File sharing comes naturally right inside GMail. Images are inlined in GMail messages. 2.6 GB free file storage space. Permanent archive with efficient and simple search. I frequently conduct 30-50 email conversations in groups of 3-5 people in GMail. Feels very much like IM.

That, being said I think Campfire looks nice and fits into the "simple language, all-in-browser" category replacers 37Signals seem to like doing. If I didn't already have a superior free version I might just buy it.

If you don't see flash and are using the Adblock extension in Firefox or if you uninstalled Adblock because you couldn't get flash to work with Adblock, you should use Adblock Plus. It works. The links pointed to on extensionmirror.nl don't work - but the install from the Adblock Plus website does. The original Adblock project has been dormant at least for a year.

I'm back from Amsterdam and the debut of EuroOSCON. I've had fun and broodje haring (which is a "pickled herring hot dog" with raw onion and pickles).

There were good talks and not so good talks and a lot of nice and interesting people.

As a perl hacker it was fun to see all (well, a lot of) the perl hackers on the mailing lists in the flesh and there were plenty of good talks for my tastes anyway.

Overall the vibe was "sharing, simplicity, source" - all the projects were focused on moving on. Tons of projects were in a remake phase rather than a make phase so they were focused on getting it right the second (or third or forth or ...) time around.

Memorable performances in the "it's about the user" category were Ben Goodger's talk on the making of Firefox and Jeff Waugh's talk on Gnome

What impressed me the most was the intense commitment to the social side of software. It's why open source works of course, but it was also top of mind for everyone from perl 6 reimplementers to Linux desktop hackers. Or maybe I just went to those talks were this was top of mind. A conference is after all designed so that you miss 80% of the fun.

I might cover some of the talks on my infrequent hacking addendum (in particular I think I'll expand on the sadness that is perl 6) but here's at least some highlights: The best talk I saw was Autrjius Tang's talk on pugs. I liked that it actually works, that there's heart and courage, vision, friendliness, openness, a complete lack of perl (or any language) bigotry and if you add a nice presentation and the ambition to go on a 10 year hacking pilgrimage a la Paul Erdös I think we have a best of show winner. If I was a benevolent millionaire (I'm not) I would sponsor Autrijus' walkabout in a heartbeat.

The Maker Faire was fun, but as a previous Ars Electronica attendee I have to say I was distinctly underwhelmed by most of the hacks. Each year Ars E presents nothing but hacks thay are way, way superior. That's all fine and dandy this is supposed to be about grassrootsy get involved hack your own stuff like MAKEing, it's not supposed to be perfect - but the secret here of course is that so is Ars E to a large extent.

Couple of good things there though. A couple of projects had vision and heart (not just hacking) in mind. The nodel project is a collection of tools for building an open voluntary people/locations/events semantic web. Fernando Botelho was interested in building cheap computers for the blind from open source software. It's more af a call for action than an existing project but there's scope and heart there.

Other than that, the most admired demo was Beth Goza's tour through Second Life - an MMORPG that has two to three unique enabling features: There's a free basic account if you want to look around. You can script the environment yourself. The interactions are social and not hack&slay. It's simply not about killing people.

I quite liked a demo that wasn't really part of the faire but just an impromtu demo by Liz Turner of her ICONAUT a newsmeme analysis tool that slices and dices news keywords with isometric iconography. It's either informing and eye-opening or just eye-opening, but it's certainly that.

(more on Technorati: tag and just search)

It seems Larry McVoy of BitMover is either a complete asshole or just your run of the mill socially inept paranoid hacker. He is actively reaching out to customers to have their employees stop working on competing open source to bitkeeper. These people aren't BitKeeper developers, they're end users. It's like if Microsoft tried preventing Word users from contributing source to Open Office. Simply, insane. Lesson learned: Avoid BitKeeper at all costs.

For those keeping track, Rollyo may be the first ever commercialization of currying as something cool. Currying is the process of taking a function

f(arg1, arg2, arg3, arg4)and defining the function f' as

f'(x) = f(x, arg2, arg3, arg4)

So a function of 4 arguments is used to generate a function of one argument (the number 4 is arbitrary and not part of the definition).

Rollyo does exactly that with Yahoo search operands. The 'arg2, arg3, arg4 part in this case consists of site:someurl localizations of search. What Rollyo adds on top is a nice interface to define the curried function and celebrity search curriers.

I didn't even know that Debra Messing could curry.

I hadn't seen the Google Feed Reader before today, it started cropping up in my referrer log. GMail-like, with what appears to be a too heavy interface for it's own good. It's quite slow at present and not immediatelty useful. But, like GMail, the choices made for how to browse feeds may make sense in the end.

(About not seeing it before: It's new.)

The otherwise excellent Firefox plugin Slogger has a serious flaw in that logging a page that you got as the result of a POST request repeats the request. Thinking in RESTian terms, you're only supposed to consider results of GET requests static redoable and cacheable, not POST requests. If you're autologging something important you might get disappointing results after purchasing that book/deleting that DB records and so on and so on. This is a similar discussion as the one over Google Web Accelerator, but more serious, since this also involves POSTs and not just ill-considered GETs.

I'll update this post if someone posts a fix to the Slogger mailing list.

OK, now that we've established that surface matters and language matters is it ok to dissect game changer hopefuls like Ning?

It looks to me like a well done, well integrated advanced web panel for a well equipped PHP hosting solution. What that means is: You use the webbrowser to configure and manage your hosted application. You can rely on certain pluggable elements that will fit right into your data (e.g. user login) but other than that you're writing PHP agains a set of compatible elements accessing e.g. all the open social services out there.

A server with a full CPAN, a web interface to my CGI directory and something like Catalyst would seem to do almost the same trick. Or the equivalent based on Rails.

But of course execution is everything and ideas nothing in this particular case. Looking forward to testing this - even if i do have to learn php.

Over on Signal to Noise there's a discussion of the speed with which writeboard fixed the obvious blunder of not locking documents.

The obvious discussion gets started: Was this held back to have some good news to offer real fast, or is this really a rapid release cycle. Some think so, some dismiss that as rampant conspiracy theory. It's impossible to tell of course, but it's worthwhile to mention that Jason Fried suggested doing exactly that (holding back features) as a marketing strategy in his talk at Reboot. Of course he didn't suggest that you should tell people another story in public. Personally I'm not sure I like the suggestion either way.

Also: The whole "no public betas" discussion seems like religion over fact to me. In both schools (i.e. Google vs. 37Signals) products get releases with plenty of flaws and shortcomings. In both cases your best bet is to hope that they're working hard to improve the product. As far as I'm concerned I derive no comfort from having the beta sticker removed when I still have to expect bugs and feature changes/additions as a part of life with the product.

Since we're talking about wikis (even ones that won't admit they are secretly wikis, you'd think they were afraid to be thrown out of the country if the secretly admitted to being open community software) I looked around to see if it was really true that there weren't any good hosted wiki services around.

There's plenty of hosting plans including wikis, but there's also Schtuff, which is free, simple, has history (with differencing in the same style as writeboard), access control, search, backups, and much more.

They could do with a fresher look and bigger fonts, but other than that it looks really nice. Bookmarked.

Other free options are here.

Good post by Rick Segal on Writeboard and the response from geeks like myself. Language matters and "Yes. This is just a one page wiki with an edit history and a forced login to view/edit.", my description of Writeboard which works for me, does not work for everyone. So what's the invention in Writeboard? Certainly nothing in the functionality of the application which really is plain old wiki with absolutely nothing new. It's not even simplified in any way. Even Ward Cunninghams ur-wiki had all the features of writeboard, with the same stunning simplicity.

The language however is clean and maybe new. The product name and domain name, immediately descriptive and comprehensible, and the language used in the application, without cutesy tech words (wiki) that mom wouldn't know the meaning of. I'll buy an argument that this really matters. (The notion of a new product which has no novelty, except in language, is intriguing. Can user centered design really be boiled down to pure language?)

The new language does however have a bad sound of 'consumer' ringing through my years. My mom won't mind at all. She is a consumer when it comes to tech. For 2-way conversation types like myself it's kind of offputting though, so I'll take my wiki'ing elsewhere.

37Signals' Writeboard is trying to derive some buzz off the recent interest in collaborative web editing, e.g. JotSpot Live or Writely. But Writeboard is in fact nothing new, but simply a 37Signals branded version of ... the wiki!

Yes. This is just a one page wiki with an edit history and a forced login to view/edit. There's no fancy rich text editing, it's plain old wiki-formatting once again. There are no collaboration features except what was already the case with a plain old wiki (reload the page to see other people's recent edits). It has less features than almost any wiki package I can think of. There are plenty of hosted wikis (or just look here).

Free is always nice, but this must be seen as a pure marketing effort to drive attention and interest to the products of 37Signals. 37Signals is of course "the Apple of simple web applications", so I'm sure they can manage to get product reviews out of this (oh wait, they have)

Been trying out the new Jotlive, a kind of subethaedit in the browser with AJAX, and it works - most of the time. There's outlining, complete with eye opening live drag and drop, it's visible what the others are doing, including the outline drag and drop. This is true AJAX eye-candy, and I think it will be useful too, although I've been using it for too short a time to actually prove that point.

Caveat: It seems to be a little buggy still with occasional disconnects and (urgh) loss of data, but if this is possible in the browser you really need good desktop apps to beat Web 2.0 applications.

What's also cool about it is the story of how it came about:

"The idea from JotSpot Live came from those two lines of thought: fine grained editing and locking combined with live updates. I had a chance to try implementing the idea at our hackathon a little while later, and (surprisingly) it seemed to work! "

WSJ is running a story on a complete redesign of Windows, supposedly in Vista

While Windows itself couldn't be a single module -- it had too many functions for that -- it could be designed so that Microsoft could easily plug in or pull out new features without disrupting the whole system. That was a cornerstone of a plan Messrs. Srivastava and Valentine proposed to their boss, Mr. Allchin.

Let's either

a): Assume it should have been so that anyone could easily plug in or pull out new features

or

b): Start hiring lawyers.

It's not just AJAX library snippets in ASP.NET, now Microsoft is also including an MPI library with a BSD license with their compute server product. Rampant communism! This software not only has a communist license but turns entire armies of machines into hard working slaves!

(lest we forget: There's also the use of Lucene.NET in Lookout - but that was more of an accident)

(oh, and FTP - BSD licenses all - so nothing sinister going on, just a nice counterpoint to the insane rhetoric against open source)

The new Office 12 interface will be something completely else. What this means of course is that organizations will have to expend huge amounts on training users to the new interface. Why not spend that money training them to use open software like OpenOffice instead? Note to office integration vendors: Do you think your existing Office integration will work seamlessly with a completely renewed office interface? No?

It's nice to see Microsoft embracing the enormous speedup in productivity inherent in code sharing and open source. That's what one thinks as Microsoft's Atlas framework for AJAX web development, while still unfinished, is already borrowing idiom and maybe even code from the already established open source solutions out there. Can't wait for the patent applications to start flowing from this "investment in innovation".

The license for the demo applications for Atlas carries a good clause that should be added to all open source licenses pronto:

(C) If you begin patent litigation against Microsoft over patents that you think may apply to the software (including a cross-claim or counterclaim in a lawsuit), your license to the software ends automatically.

Again, nice to see Microsoft apply the same kind of nuclear war license tactics that, when found in open source, are considered "communist".

Jon Udell posts about an experiment in cookie development, pitching cookie emulations of three common work paradigms in software against each other. The the glee of commercial software types, the traditional managed approach won. This is entirely unsurprising, but not for any of the reasons Udell gives.

From the description given, it's unclear whether staffing was the same on all projects, but even if it were there's no surprise in that the managed team came out ahead. What they were doing was delivering one take on one product - no second generation to consider, no bugs to fix. I don't know of any argument why this would be faster with open source.

Secondly, all the succesful open source projects have a 'star manager' (in the parlance of Udells post). Linux has Linus, Python has Guido, Perl has Larry, Firefox has Goodger and Ross. The list goes on. It's unclear whether this was the case for the cookie project, but if not I can't think of any good reason why open source by committee would be any better than any other software designed by committee. I am reminded of Jimbo Wales' statement about Wikipedia at Reboot: It doesn't work because it's a reputation system, but because it's a reputation culture. There's always someone there to command natural respect.

The reasons given, while obviously not without merit for the case of Linux (there was plenty of inspiration around) just fail completely for other categories of software. Apache was a semi-early entry in its category and was based on the equally open NCSA HTTP daemon. Not first, but still quite early. Python/Perl/Ruby had no obvious predecessors. In short, the reasoning that open source can't lead but only follow seems entirely bogus, based on examples defined by succesful commercial software. Obviously in these cases, open source can't win.

Best post in a while from from Just: Your PC is a tamagotchi. And he's right. Who wants one of those, really?

You can do a lot on the server of course, but apparently, you can even test the UI itself. Automated. Cross platform (browser + OS). Nice.

The only thing I'm left thinking is this: Wouldn't the test itself tend to work exactly when the browser is well behaved and therefore provide to many positive test results?

Just as I was prototyping my own web framework (frameworks are like CMS's everybody wants their own) making webprogramming simpler for personal projects, I hear I have to investigate Catalyst as an attempt at this. Let's hope it installs better than Maypole did* (never got that to run on Windows) and that it can gain some momentum.

Sofar I'm liking it - template engine is the Template Toolkit, model uses Class::DBI - just what I was considering up front.

I was going to call mine pails, as a joke and to indicate a tool to pump water off the sinking ship that is perl. Between Python, Ruby and PHP there's a lot of competition in the scripted world.

* [UPDATE: It did. Straight off CPAN, requiring no crazy modules. No default usable cgi script for use under mod_perl2 though]

He didn’t bother to tell me what it does, and remember, I only really started looking at Ruby last week, but it’s obvious

A parable: I recently returned from vacationing in Italy. I don't speak a word of Italian, but I was pretty much able to read all the signs (All 10$ words come from latin. I like 10$ words). I still don't speak a word of Italian.

Tim Bray's example reminds a little of this. I know and use a multitude of languages and I realize ruby isn't far from some of them. The example Bray quotes is really easy to make out. But it's almost as if the proximity of ruby to e.g. perl is working against me, for much the same reason I never bothered to learn proper Swedish.

Excellent post on structure vs. data, the soundbite is

Data First strategies have higher usability efficiency (all rest being equal) than Structure First strategies.- which means nothing but the following: Structure is unnatural for us, it must be learned and until we learn we are challenged by structure. Data, more naturally, is just language and we've been wired for that for 40000 years.

On the other hand as the post notes, structure works. If you can hide the structure and use it then structure is very efficient.

The only problem wth the last assertion, and we're learning that during the current "remix everything" paradigm and the emergence of the hypercomplex society, is that we quite simply can't keep up - and that the structural efficiencies that we're used to are too expensive to be valuable when the structure we apply them to are as volatile as structure is in our highly mutable digital society.

This is also the reason why microformats have been so hugely successful and why the semantic web, old style, is unlikely to succeed in the near future.

I'm torn on the Hitting The High Notes thing. Clearly good programmers are qualitatively better than mediocre programmers. They solve other problems than the mediocre ones and they're much better at solving the right problem.

It's got a lot to do with the "Never solve the problem as stated" rule. It is almost never the task to just do what you're told. That's what mediocre people do (if you're lucky), but the reality is that you need people who will help you solve netirely different problems than the one you stated.

On the other hand, I think the cult of excellence is frequently wrong. It's much more important to simply be than it is to be excellent. And I think most software proves that point on a daily basis. We're using what's there - we're not waiting for the very best fix to our particular problem. Being there simply rocks.

More on bootstrapping: Technorati was started on 3 weekends of hacking.

What the hell am I doing with my free time? I've got to get my Gmail organizr hack done...

In the same week Google Earth is (re)launched as a completely free version of Keyhole, Google adapts to the intense interest in Google Maps and publishes an API. Bloody excellent.

I can't be fun competing with that.

It is interesting how much easier it is for Google to launch all this stuff without a public backlash because there is absolutely no question of monopoly for search (nobody has one) and also because Google behaves so very well interop-wise (free and available API's for anyone to tinker with). Yahoo also gets it. Amazon also gets it. Microsoft only partially gets it and Apple, despite having an extremely hackable platform, doesn't get it at all in terms of communication and the data services Apple also offers.

It's no wonder, given the fact that the designers of Java completely botched the implementation of generics, that sentiment along the lines of Generics Considered Harmful begins to appear. But generics aren't harmful. They just need to be done properly, used properly and tooled properly. It is quite possible that no programmer has ever been in a situation that all of these preconditions were met.

The generics in C# and java are just badly done. Pure and simple. Too much work left for the developer, and not nearly enough reliance on the compiler. This takes away the breathtaking flexibility of C++ generics, but still adds the complexity.

That's the "done properly" part. Clearly, even C++ gets it partially wrong, but mostly in ways that you could tool your way out of. As for "used properly" - obviously you can do horrible things with templates. If you use it for it's basic usefulness - which is to provide the equivalent of all the useful helper words and verb and noun modifiers from natural language - then generics work just great. And not having them, leaves you with the alternative of a rich external build environment - you need code generators to not go insane.

Tooling is abysmal in all cases I have seen. The compilers I've used are just to slow. They expose the complexity of template definitions to unwitting template users (a horrible, horrible problem - you should never have to know the insides of a template you use) and fail in other ways to tool templates properly. I've made more comprehensive notes here.

Microsoft's plans to "extend" (that MS speak for break) the RSS standard, as reported here.

Just wanted to have a post to reference back to, when Microsoft's patent application for RSS appears in a few years.

The bright new world of weakly typed, hackable web services also hold new perils. Google just switched GMail from using the domain gmail.google.com to mail.google.com - and at least the GMail power tweaks I'm using just blew up.

[UPDATE: What really seems to break everything is in fact the path part of the url: It switched from being rooted at /gmail to being rooted at /mail]

I'm betting tons of people's little compiled micro-applications just blew up too. That'll teach you to use static resources to bind to anything as dynamic as a web site.

I wonder how long it will take before companies start achieving notoriety when they break 3rd party hacks for websites. Clearly they could claim that their service wasn't intended for hacking, but according to Microsoft old timers (and from reports also according to the leaked source of some time ago) Microsoft, of compatibility breaking notoriety, could actually claim the same thing. The main problem on Windows was always use of undocumented calls. Again: This is according to reports.

When will Google start feeling Microsoft's pain?

Det er blevet tid til at efterrationalisere lidt mere på Reboot end bare ved at referere foredrag - og bare for at holde fast i den globaliserede virkelighed vil jeg gøre det på dansk, selvom det er helt ligemeget at det er på dansk.

Om få år er det jo alligevel ligemeget. Og hey, som alle ved, blogger man jo mest for sin egen skyld.

David Axmarks foredrag om open source havde en vigtig pointe:

Open Source is brilliant at getting the little things right

Det blev sagt i sammenhæng med den sædvanlige anklage at open source ikke gennemfører de store innovationer. Axmark brydede sig af at man til gengæld gør det små ting rigtigt. Han har ret og der er en rent økonomisk forklaring på det:

Langt det meste closed source software bliver skrevet til ét projekt. Dvs langt de fleste kodelinier kun har ét projekt til at finansiere deres udvikling. Det sætter en ganske lav grænse ved hvor mange penge der er til at lave den enkelte linie kode korrekt og nyttig i sammenhængen.

Open source har den gigantiske fordel at koden bliver genbrugt og genbrugt og genbrugt. Der er tonsvis at projekter til at finansiere den enkelte kodelinie og derfor bliver den bedre samtidig med at det enkelte projekts prisbidrag til koden falder mod nul.

Spørgsmålet er om man ikke kan lave en rationel økomisk model af open source ud fra den tankegang hvor prisen 0 simpelthen kommer ud som 'naturlig'.

I always found the 20-way drop down with language pairs ("English to French, Portuguese to English" and so on) on web translation sites annoying. The proper thing for these services to do is to detect the language of the page you want to translate on their own and just show it in english already - an expert interface could be a button away, but simple things ("this page to English") should be simple.

I've made a bookmarklet (and a perl script) that does exactly this. It loads a webpage, tries to guess the language used, if Google translate supports it it is then translated and you're done.

Drag it to your toolbar. The usual Javascript bookmarklet security caveats apply. The code will know about pages you load, and clicking on the link will let me send you anywhere. But I don't.

Don't abuse it please, or I'll have to take it down.

The underlying language categorizer is TextCat. This service works no better than TextCat does.

[UPDATE: This actually works directly on Google]

[Update: Better version over here, that stores the searches serverside so this works from multiple machines]

God bless Greasemonkey. Now I can have stored searches in GMail implemented as a Greasemonkey user script. Justerens comment was on the nose "Isn't that what filters and labels are for?". It is - but filters only applies to new email - and spamfiltering overrides filters. I have consistent problems with specific kinds of ham, that I actually have rules to pick up, that ends up filtered as spam.

Google has taken the '00s concept of open source code bounties to new heights. This summer Google will sponsor, a large number of of open source projects, offering a bounty of 4500$ for up to 200 participating developers - if they complete their designated project.

Projects that take on developers will also receive a smaller sum to help in managing the summer coders.

Maxing out on the developer limit, the project will amount to a 1 mio$ contribution to open source.

The companies that sponsor/employ the important members of the large open source projects probably contribute more money than this - but even if this is mainly good advertising it's still a huge deal for the projects that benefit.

[Update: It's x86]

Can't say I knew before I read the infoworld story but the huge fuss (also on slashdot) over Apple's supposed switch to Intel seems to be completely unaware that OS X already runs on x86. There are obvious commercial reasons not to go to a highly clonable platform, but technically Apple seems to be well along the way already.

Sigh. The Firefoxians must really, really hate me. Not only did the install completely fuck up. They broke "open everything in tabs, not in new windows" again. I absolutely really, really hate this. I've tried the usual settings but nothing works. Could someone please remind me what secret hack I'm forgetting when I want nothing at all to open any new stinking windows, except my own personal self going to the file menu and asking "Open a new window".

Found it.

Dansk fortsættelse: Oversætterne af Firefox er helt sikker velmenende, men for helvede for nogen kedelige, dårligt skrivende geeks. På engelsk står der mundret "Tabbed browsing" i settings. På dansk står der "Fanebladsbaseret internetsurfing". Det hjælper jo ikke at applikationen er oversat til dansk når det sprog den er oversat til er fuldstændig rædselsfuldt. Det er dårlig, dårlig "Jusabilligti".

I absolutely buy the part of Joe Kraus' Hackathon post that says that short focused bursts with a focus on actually shipping is how the really good stuff gets done. The problem with hackathons is that they are not, in my experience, truly sustainable, reproducible events.

My personal experience with Hackathons come from doing maybe 10 of them, either alone or with 1-2 co workers. This has been possible where I've worked due to trusting or hands off management - either way works if you have geeks of quality on your staff.

Management of that kind is a prerequisite, but it is not how hackathons get done: You need an itch to scratch. Good ways to find itches are "stuff is cool", "stuff is really, really late and really, really necessary" or just that you genuinely have an itch to scratch.

The problem with hackathons is that if you try to run a hackathon as a "process" without that itch, you'll get nowhere fast. A day off the map with less than 100% motivation is just a day off the map. I have tried, and I have seen colleagues try, to falter because the itch we felt wasn't genuine, which meant that the hackathon wasn't the focused energy boost it's supposed to be, but just a day with an undefined task and a tight deadline.

But when it's good it's good. I think all the really essential core ideas in our product has come out of sessions like hackatons.

The search intensity coloring on Google search history is not graded for power users...

So the automation of the tag hack is done, tags followed here. Suggestions were followed, so tags are now named tag_ something not deli_ something. Updated daily (which might be a too low frequency for some of these tags). I'll clean this up, autodetect tags from RSS feed links and share the tracker script in a little while.

But just as I finished I saw the error of my ways: I should have just made an XSLT of the RSS feed, and let Google crawl the result of that. That would be autoupdated and google would scrape it for me. Must fix.

Major news in Longhorn: A Red Screen of Death. Is that a communist Red Screen of Death or a republican Red Screen of Death I wonder?

Or is it quite simply a Masque of Red Death?

As previously mentioned I wasn't impressed by backpack. Other people are, mainly because of the email integration. To me that feature is much better when just emulated with GMail. What you get is a responsive AJAX interface, spam and virus filtering, lightning fast search, tons of storage and a price tag of $0 per year for as many pages as you like as long as you stay under 2GB of total storage.

Here's what you do - it's just 2 easy steps

- Create a label on your GMail account called e.g. gpack

- Create a filter for email to you+gpack+securitytoken@gmail.com - set label gpack, remove from inbox

"But GMail doesn't provide public permalinks to messages", I hear you cry.

This is why I'm working on GPack, a miniature email backed wiki implementation. It support textile formatting and automated updates when your GPack is updated via email. It'll be done real soon.

Incident to the previous post, you might enjoy Larry Wall interviewed here on perl as a glue language and perl people as glue people (no it's not a glue-sniffing pun), multi paradigm aware integrators of things.

As I thought about that it struck me how very apt the term 'glue language' is in the case of perl. Often when you're trying to stick things together with glue you find that the glue ends up sticking to you instead in a big mess. Perl does that sometimes.

While I can see the point of Basecamp ("The simplest thing that could possibly work" for project management), Backpack seems utterly pointless. It's an "almost wiki" where each wikipage consists of multiple data items, combined with a TODO list. Pages have access controls and there's a simple email=>wiki update feature where you can do an edit to a wiki page by sending an email.

Augmented wikis are done better elsewhere.

Parts of the application simply shouldn't have been released, since they are clearly not done yet. The "email this page" feature is horribly implemented. First of all - to email a page you have to use a special "email key" as the address. Having a hard to remember email address to keep track of outside your personal organiser application kind of defeats the purpose of an organiser, no? There are simpler and better ways to handle the security issues of email, e.g. the way JotSpot does the it. And when you do send an email to a page, what you get is an embedded subject line, which links to a page with this inviting look. Incidentally, I did not set up this page to be public. Access control just isn't working yet for embedded email.

There's no easy to find search for your data. I thought GMail had made that mandatory for this kind of application.

The most impressive thing is the hype/content ratio on this project - almost enterprise grade. The hypefest here really tested my gag reflex.

But the worst thing is actually this: I don't think the 37 signallers realize they just created a "me too" product of the worst kind. There is nothing new here that isn't in several open source packages and/or one of the other social software products. No convincing extra simplicity, no fresh new UI ideas.

To check the Google tag hacking idea, I've created a script to generate tag search tokens from del.icio.us RSS feeds. I plan to run this nightly on many different tags, but for now i've just done one run on the tag googlehacks. The generated page is here.

Feel free to mirror a copy and link to that and/or my copy. The more the merrier in getting some momentum for the tag search google bomb. When I get the full setup done with nightly updates, I'll share the script to generate the html, not that it's in any way complicated.

The tags I use consist of the prefix 'deli_' in front of the tagname. Tags with non alphanumeric characters are mangled (all non alphanumerics replaced by _).

I've been thinking about whether this might get me accused of link farming, but I wouldn't think so. This isn't farming. I actually don't want the pages of links themselves to be popular. I am only interested in injecting search tokens in the searchable text set for other pages. For people uninterested in these made up terms (currently leading to empty searches) this shouldn't be an issue. The search tokens won't crop up in result lists, won't taint any search for "real" search terms and I wouldn't expect them to affect page rank either.

The GMail filesystem - an actual, mountable Linux filesystem stored on GMail.

This is less surprising than it sounds, because good GMail libraries exist and good "File System on Anything" libraries exist, but it's still a very cool hack and it underlines some of the important points that are anti-software patent and pro open source.

- It is not only hard, but impossible to predict the uses of technology you choose to share.

- It is not only hard, but impossible for you to realize the full potential value of a software invention you make

- Arthur C. Clarke famously remarked that "Any sufficiently advanced technology is indistinguishable from magic". Something close to the converse is true for software - "Even magic is just made of fancy combinations of technology". If you want magic, you should support and encourage rapid and widespread combinations.

It's beginning to look like the only thing protecting the desktop from irrelevance is the lack of a widely deployed easy to use infrastructure for private URL spaces. What's changing the desktop from enabler to encumbrance is our poor ability to integrate our local data with the many web service providers that are quietly starting to dominate the attention landscape. The ability of data to integrate into these services is beginning to dominate all other uses of data.

This struck home with me as Infoworld gave up on homegrown taxonomies and started just using del.icio.us instead. The thinking used to be that providing structure was what a content provider did, but now Infoworld is turning that model on it's head and just trusting the common taxonomy instead.

With any luck this will also mark the beginning of the end of the ridiculous notions of deep links and front pages. Clearly, when Infoworld gives up owning the taxonomy, each Infoworld page has to survive on it's own merits, independently of any site map Infoworld has canonized.

I am aware that this may seem like old news to many readers, and I agree that it is, conceptually. As a practical problem however, it is only now becoming a huge problem as the variety of services available to augment your data just keeps growing. It's also worth noting that so far SOAP has had nothing to do with this new services world.

Apartments listed on Google Maps

People have started annotating Google Maps satellite images - sadly not on Google Maps itself, but rather on Flickr using exported images.

myGmaps provides a direct Google Maps annotation system. I can't imagine this service will not be shut down as they are effectively stripping all Google content except the map itself.

Also, people are sightseeing on Google Maps.

GMail is being used as an online back system.

Greasemonkey simplifies and automates end-user customizations of websites (for Mozilla users only). This is a good thing as we recall from the allmusic redesign scandal.

Yahoo's term extraction service has a nice interplay with Technorati.

Embracing user defined extensions of your service can be a powerful thing...

The Starter Edition is a simplified version of Windows XP, oriented at users who have never had a computer or have little computer experience. It can open only three programs at the same time, with a maximum of three windows for each program and cannot connect to computer networks.This crippled version of Windows is intended as a "third world Windows" to combat Linux adoption by 3rd world goverment programs. So the poor are only entitled to crippled computer systems? That Microsoft would consider this a good option, and possibly good PR is beyond me. Why any government would even consider this option is also beyond me. The restrictions are arbitrary and purely commercial and smack of discrimination - and from a purely technical point of view, I would guess that they limit the usability of the system to such an extent that a well configured Linux box would actually be a better buy for poor, computer illiterate users.

Keep them flash files loading, Loading, loading loading - Rawhide!

Good find by Just

"Darcs is a revision control system. [...] based on a "theory of patches" with roots in quantum mechanics. "

Utterly cool. Possibly too cool.

I am slightly unimpressed with A9's Opensearch. A standard protocol for publishing search interfaces is a good idea. Whether basing it on RSS 2.0 is a good idea remains to be seen - but at least something somebody calls RSS is widely deployed and it is also extensible so that a search engine may extend the metadata published in search results.

What is decidedly underwhelming is the Opensearch aggregator A9. Seeing the data overkill of a 5-way multi-search aggregated into an A9 user profile brings me back to pre-google days when everybody, mistakenly, thought the problem was finding the right data. That's not the problem. It's not finding all the junk that's hard. A9 is like a browser based version of those desktop super searchers that were popular back in the 90s. And like those tools it is quite simply solving the wrong problem.

The next question then is what the right problem for Opensearch is if it is not the Opensearch aggregator. Personally I think the Opensearch search profiles will be extended with some kind of search profile indicating the grammar of searchable assertions (e.g. a specification telling me that I can search a particular database for the address of post offices based on postal codes). My search for post offices will then lead to this search profile and I will be able to use it. It will be sort of a weakly linked version of the semantic web. The inly version of the semantic web that could ever work.

An overlooked aspect of trackbacks is that as a trackbacker you also get an inderect traffic measurement for the trackbackee. If we assume some kind of fixed clickthrough rate for the links you are able to plant elsewhere, then your referrer log gives you a good indication of the traffic to URL's you've trackbacked.

All this to say, that judging from my referrer log, Joe Kraus' long tail post and Adam Rifkin's microformats post are getting read a lot.

Kraus' post is brand new and interesting, so no wonder there, but Rifkin's post seems very consistent traffic wise.

It's no surprise that Microsoft loves Groove Networks and its product. It's closed source. It's closed data. It's locked to Windows. But all of these properties also mean that Groove is just so old fashioned. I'm not saying the product isn't viable - in fact the few times I've used it it's been pretty neat - but I can't help but wonder how many companies wouldn't rather just use Basecamp for data sharing and project tracking and Skype for voice along side it.

If you want more control there's something like Jot.

For a whole lot of people it's not really important for these applications to be integrated in to one closed data universe. Rather, that's a huge disadvantage. And it is obviously easier to integrate an open enterprise (i.e. one where company, customers and subcontractors talk to each other across technical and corporate boundaries).

When Groove started, these easy to use almost-free collaborative spaces online didn't exist, but now they do and that makes it hard for me to see why Groove will remain interesting.

The google map hack still takes more effort than it should in the final product (I'm hoping that Google doesn't try actively to fend these annotations off but realize the immense value in them), but there's a better writeup than any I have seen on engadget.

Applications like GMail and Google Maps are supposedly called Ajax applications - Asynchronous JavaScript and XML. While it's nice for users, it is not necessarily cleaner designwise (sorry couldn't resist).

The (currently) last comment on this post is good:

Reading around this it seems there is a more away from LAMP and towards Ruby on Rails rather than Perl or PHP; BSD rather than Linux and maybe lighttpd rather than apache, MySQL seems to be the constant.I don't know why the direct translation BLMR (as in late) is so bad, though.

So rather than LAMP try LMBR, pronounced limber to coin a new acronym.

Earlier I wrote about a 1991 Microsoft memo

[...]this was back when printing was a difficulty[...]

You have to give credit where it's due. Microsoft made printing easy. I was reminded of this when surfing by Jon Udell's OS X printing horror story.

The many GBrowser rumours just got a new shot of fuel, with the announcment that Mozilla Firefox lead developer Ben Goodger now works for Google.

According to item 4 of this Google seminar writeup nobody uses the "I'm feeling lucky" button. Must be because they don't know how. "I'm feeling lucky" is absolute essential to navigate websites with crummy site navigation and/or search facilities.

What you do is create an "I'm feeling lucky" search shortcut (in mozilla firefox obviously - you have switched haven't you?) using the site:crummysearch.org search parameter (or alternatively the inurl parameter) to restrict to the poor site in question. Since Pagerank works so much better than most website ranking algorithms this actally works much better than the search page on the website itself. You usually get there first go. Good cases where it works are (at least) wikipedia, IMDB and allmusic.com.

Your average website (including this one) has surprisingly crummy search facilities. It's one thing that they're slow - but the second thing is that people have typically done a premature optimization and indexed the content in the database instead of indexing the shown pages. For this weblog, for instance, that means that page comments don't get indexed along with their posts. This is a very common phenomenon. It is particularly annoying when people misunderstand the web and do a full robot block via robots.txt (e.g. all Danish newspapers do this). Their own search facilities are no match to Google's - which means their sites have about 1/10th the usability they could have if they were Google indexed.

Meanwhile, we're waiting for "Google Tags" - useful stored public searches, available at urls like this: http://tags.google.com/myspecifictag - Maybe I should just make a service like that here on classy.dk. Obviously, there are resources like the The Google Hacking Database, but they don't have live queries...

Ha! The irony is thick here at classy.dk today. It was only a few hours ago that I wrote that I had yet to see a malicious Moveable Type plugin, so that the lack of a security model in software like MT was not yet a problem. And now that I'm catching up on my K5 I learn that actually a free weblogging service was actually used maliciously just recently. It's not quite an attack via server side software, being a case of bad HTML filtering in comments instead, but it strikes awful close.

If you develop anything for the web, or even if you're just a user at the geeky end of the user scale you should read Adam Rifkin's aggregated take on weblications, Link's from that post will keep you busy for a long time. Of particular interest The Web Way, because it links to so many other cool places. Of more particular interest, this presentation on 'the lowercase semantic web' which is a new moniker to describe all those metadata enhancements that are gaining popularity because of the popularity of blogging. Blogging has created a market for smarter clients (sometimes just neat plugins for firefox or similar) able to extract useful data from DHTML, meta tags and link rel attributes and that in turn breeds these kinds of new micro standards.

You probably want to read this before enjoying the etech slide show.

Actually, what all this metadata tells me is that all of the new competing "closed source but free" desktop search engines coming out from all the "We're the platform"-contenders are failures in the making. There's so much metadata in the web you browse everyday and none of the desktop tools are ready to aggregate that metadata for you in a useful way. Nor will they ever be.